Hello my dear Anglophones!

I’m going to create some generic internet banter for you:

Person 1

– Look here at the differences between American English and British English, crazy stuff! (with the addition of some image or list)

Person 2

– *Something along the lines of*:

Person 3

– *Something along the lines of*:

Person 4, referring to the ‘u’-spellings in British English (colour, favour, etc.):

Then, usually, person 5 comes along with something like:

Person 5, let’s call them Taylor, has read somewhere that the American English accent shares more features with English as it was spoken in the 17th century, when America was settled by the British, and therefore argues that American English is more purely English than British English is. Taylor’s British friend, Leslie, may also join the conversation with something like “America retained the language we gave them, and we changed ours.”1

In this post, I will try to unpack this argument:

Is American English really a preserved Early Modern English accent?2

Firstly, however, I want to stress that one big flaw in this argument is that American English being more similar to an older version of English doesn’t make it any better or purer than another English variety – languages change and evolve organically and inevitably. (We have written several posts on the subject of prescriptivism, resistance to language change, and the idea that some varieties are better than others, for example here, here and here.)

Now that we’ve got that out of the way, let’s get to the matter at hand. The main argument for why American English would be more like an early form of English is that it is modelled on the language of the first English-speaking settlers, which in the 17th century would be Early Modern English (EModE, i.e., the language of Shakespeare). In fact, there is some truth in this: some features of EModE are still found in American English but have changed in (Southern) British English, such as:

- Pronouncing /r/ in coda position, i.e. in words like farm and bar. This feature is called rhoticity; an accent that pronounces these /r/s is called a rhotic accent.

- Pronouncing the /a/ in bath the same as the /a/ in trap, rather than like the /a/ in father, which is what we usually associate with British English.

- Using gotten as a past participle, as in “Leslie has gotten carried away with their argumentation”.

- Some vocabulary, such as fall (meaning autumn), or mad (meaning angry).

- The <u>-less spelling of color-like words.

So far Taylor does seem to have a strong case, but, of course, things are never this simple. Famously, immigration to America did not stop after the 17th century (shocker, I know), and as the British English language continued to evolve, newer versions of that language will have reached the shores of America as spoken by hundreds of thousands of British settlers. Furthermore, great numbers of English-speaking migrants were from Ireland, Scotland, and other parts of the British Isles where people did not speak the version of British English which we associate with the Queen and the BBC (we call this accent RP, for Received Pronunciation). Even though the RP accent remained prestigious for some time in America, waves of speakers of other English varieties would soon have outnumbered the few who still aimed to retain this way of speaking. Finally, of course: Taylor not only (seemingly) assumes here that British English is one uniform variety, but also that American English has no variation – a crucial flaw, especially when we talk about phonetics and phonology.

If we look at rhoticity, for example, English accents from Ireland, Scotland and the South-West of England are traditionally rhotic. Some of these accents also traditionally pronounce the /a/ in bath and trap the same. Where settlers from these regions arrived in great numbers, the speech of the areas where they settled would naturally have shifted towards the accents of the majority of speakers. Furthermore, there are accents of American English that are not traditionally rhotic, like the New England accent, and various other accents across the East and South-East, such as in New York, Virginia and Georgia. This has to do with which accents were spoken by the larger numbers of settlers there; e.g., large numbers of settlers from the South-East of England, where the accents are non-rhotic, would have influenced the speech of these regions.

Finally, while the /a/ in bath and trap is pronounced the same in American English, it is not the same vowel as is used for these words in, for example, Northern British English. You see, American English went through its very own sound changes. One of these is the Northern Cities Vowel Shift, found in accents around the Great Lakes, which affected vowels such as this /a/ so that it came to be pronounced more like ‘ey-a’ in words such as man, bath, have, and so on. Also, let’s not forget that American English carries influences from all the other languages that have played a part, to a greater or lesser extent, in settling the North American continent from Early Modern times until today, including but not limited to: French, Italian, Spanish, German, Slavic languages, Chinese, Yiddish, Arabic, Scandinavian languages, and Native American languages.

In sum, while American English has retained some features of EModE which have changed in British English, the flaws in Taylor’s and Leslie’s argument are many:

- Older isn’t necessarily better

- Large numbers of English speakers of various dialects migrated to America in the centuries after the original settlers, and their speech makes up the beautiful blend we find in today’s American English accents.

- British English was not the only language involved in the making of American English!

- British English is varied: some accents still retain the features which are said to be evidence of American English being more “original”, such as rhoticity and pronouncing the /a/ in ‘trap’ and ‘bath’ the same. American English is also varied, and the most dominant input variety in different regions can still be heard in the regional American accents, such as the lack of rhoticity in some Eastern and Southern dialects. In sum: let’s not assume that a language is uniform.

- American English underwent its very own changes, which makes it just as innovative as British English.

- No living language is static, Leslie, so your argument that American English never changed is severely flawed.

So the next time you encounter some Taylors or Leslies online, you’ll know what to say! And, of course, let’s not forget what the speakers of both British and American English have in common in these discussions – for example, forgetting that these are not the only types of English in the world.

More on this in a future blog post!

Footnotes

1This is actually a direct quote from this forum thread, read at your own risk: https://forums.digitalspy.com/discussion/1818966/is-american-english-in-fact-closer-to-true-english-than-british-english

2A lot of the material used for this post is based on Dr. Claire Cowie’s material for the course LEL2C: English in Time and Space at the University of Edinburgh.

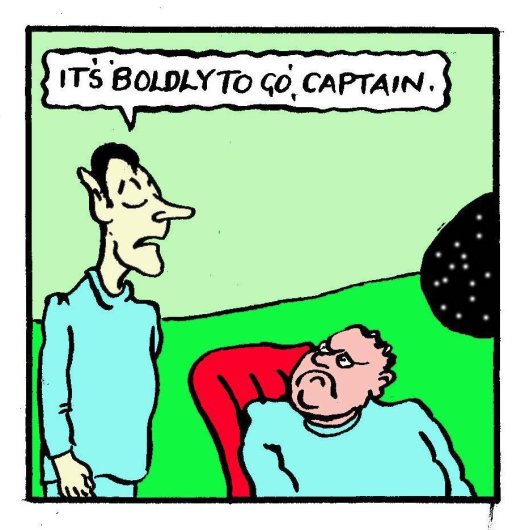

Or rather, “To go boldly”1

Or rather, “To go boldly”1